Integrating the FEM Module from FFCA-YOLO into YOLOv11 to Improve Small Object Detection

Author: @小白创作中心

Reference

1. CSDN: https://blog.csdn.net/StopAndGoyyy/article/details/143866491

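FFCA-YOLO Paper Overview

The paper "FFCA-YOLO for Small Object Detection in Remote Sensing Images" proposes FFCA-YOLO, an efficient detector for small objects in remote sensing images. It introduces three modules to address insufficient feature representation and background confusion:

- Feature Enhancement Module (FEM): strengthens local feature perception.

- Feature Fusion Module (FFM): performs multi-scale feature fusion.

- Spatial Context Aware Module (SCAM): strengthens global association.

To reduce computational cost, the paper also proposes a lightweight variant, L-FFCA-YOLO, built on partial convolution (PConv).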
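To give a rough sense of why partial convolution lightens a model, the sketch below compares the multiply-accumulate count of a regular 3×3 convolution with that of a PConv, which convolves only a fraction of the channels and passes the rest through unchanged. The 1/4 channel ratio and the feature-map size are assumptions chosen for illustration (1/4 is the ratio used in the FasterNet paper that introduced PConv); this article does not state the exact L-FFCA-YOLO configuration.

```python
def conv_macs(h, w, c_in, c_out, k):
    """Multiply-accumulates of a dense k x k convolution (stride 1, 'same' padding, no bias)."""
    return h * w * k * k * c_in * c_out


def pconv_macs(h, w, channels, k, ratio=0.25):
    """MACs of a partial convolution: only `ratio` of the channels are convolved."""
    c_p = int(channels * ratio)  # convolved channels; the remaining ones are copied as-is
    return conv_macs(h, w, c_p, c_p, k)


# Hypothetical feature map: 80 x 80 with 256 channels
regular = conv_macs(80, 80, 256, 256, 3)
partial = pconv_macs(80, 80, 256, 3)
print(regular, partial, partial / regular)  # the ratio is (1/4)^2 = 1/16
```

With a channel ratio of 1/4, the convolved part costs 1/16 of the dense convolution, which is where the savings come from.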

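Integrating the FEM Module into YOLOv11

1. Create the script file

Create a script named blocks.py under ultralytics/nn to hold the FEM module code.

2. Copy the code

Copy the FEM module code into blocks.py: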

import torch
import torch.nn as nn
from ultralytics.nn.modules.conv import Conv


class BasicConv_FFCA(nn.Module):
    """Conv2d followed by optional BatchNorm2d and optional ReLU."""

    def __init__(self, in_planes, out_planes, kernel_size, stride=1, padding=0, dilation=1, groups=1, relu=True,
                 bn=True, bias=False):
        super(BasicConv_FFCA, self).__init__()
        self.out_channels = out_planes
        self.conv = nn.Conv2d(in_planes, out_planes, kernel_size=kernel_size, stride=stride, padding=padding,
                              dilation=dilation, groups=groups, bias=bias)
        self.bn = nn.BatchNorm2d(out_planes, eps=1e-5, momentum=0.01, affine=True) if bn else None
        self.relu = nn.ReLU(inplace=True) if relu else None

    def forward(self, x):
        x = self.conv(x)
        if self.bn is not None:
            x = self.bn(x)
        if self.relu is not None:
            x = self.relu(x)
        return x


class FEM(nn.Module):
    """Feature Enhancement Module: three parallel branches plus a 1x1 shortcut."""

    def __init__(self, in_planes, out_planes, stride=1, scale=0.1, map_reduce=8):
        super(FEM, self).__init__()
        self.scale = scale
        self.out_channels = out_planes
        inter_planes = in_planes // map_reduce

        # Branch 0: 1x1 conv followed by a plain 3x3 conv
        self.branch0 = nn.Sequential(
            BasicConv_FFCA(in_planes, 2 * inter_planes, kernel_size=1, stride=stride),
            BasicConv_FFCA(2 * inter_planes, 2 * inter_planes, kernel_size=3, stride=1, padding=1, relu=False)
        )
        # Branch 1: 1x1 -> 1x3 -> 3x1 -> dilated 3x3 (dilation=5)
        self.branch1 = nn.Sequential(
            BasicConv_FFCA(in_planes, inter_planes, kernel_size=1, stride=1),
            BasicConv_FFCA(inter_planes, (inter_planes // 2) * 3, kernel_size=(1, 3), stride=stride, padding=(0, 1)),
            BasicConv_FFCA((inter_planes // 2) * 3, 2 * inter_planes, kernel_size=(3, 1), stride=stride, padding=(1, 0)),
            BasicConv_FFCA(2 * inter_planes, 2 * inter_planes, kernel_size=3, stride=1, padding=5, dilation=5, relu=False)
        )
        # Branch 2: 1x1 -> 3x1 -> 1x3 -> dilated 3x3 (dilation=5)
        self.branch2 = nn.Sequential(
            BasicConv_FFCA(in_planes, inter_planes, kernel_size=1, stride=1),
            BasicConv_FFCA(inter_planes, (inter_planes // 2) * 3, kernel_size=(3, 1), stride=stride, padding=(1, 0)),
            BasicConv_FFCA((inter_planes // 2) * 3, 2 * inter_planes, kernel_size=(1, 3), stride=stride, padding=(0, 1)),
            BasicConv_FFCA(2 * inter_planes, 2 * inter_planes, kernel_size=3, stride=1, padding=5, dilation=5, relu=False)
        )

        # Fuse the concatenated branches (6 * inter_planes channels) back to out_planes
        self.ConvLinear = BasicConv_FFCA(6 * inter_planes, out_planes, kernel_size=1, stride=1, relu=False)
        self.shortcut = BasicConv_FFCA(in_planes, out_planes, kernel_size=1, stride=stride, relu=False)
        self.relu = nn.ReLU(inplace=False)

    def forward(self, x):
        x0 = self.branch0(x)
        x1 = self.branch1(x)
        x2 = self.branch2(x)

        out = torch.cat((x0, x1, x2), 1)
        out = self.ConvLinear(out)
        short = self.shortcut(x)
        out = out * self.scale + short  # scaled residual
        out = self.relu(out)
        return out
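As a quick sanity check on the module above, the following pure-Python sketch traces the channel widths of each FEM branch and confirms that the dilated 3×3 convolution (padding=5, dilation=5) preserves the spatial size. The helper names conv_out_size and fem_channels are made up for this illustration; the arithmetic mirrors the constructor arguments in the listing and the standard convolution output-size formula.

```python
def conv_out_size(size, kernel, stride=1, padding=0, dilation=1):
    """Standard Conv2d output-size formula for one spatial dimension."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


def fem_channels(in_planes, map_reduce=8):
    """Channel widths used inside FEM for a given input width."""
    inter = in_planes // map_reduce
    return {
        "inter_planes": inter,
        "branch0_out": 2 * inter,
        "branch1_mid": (inter // 2) * 3,  # after the first asymmetric conv
        "branch1_out": 2 * inter,
        "branch2_out": 2 * inter,
        "concat": 6 * inter,  # input width of ConvLinear
    }


print(fem_channels(128))
print(conv_out_size(40, kernel=3, padding=5, dilation=5))  # 40: spatial size preserved
print(fem_channels(8)["branch1_mid"])  # 0: too few input channels for the default map_reduce
```

With the default map_reduce=8, in_planes should be at least 16 so that (inter_planes // 2) * 3 stays positive.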

3. Modify tasks.py

In ultralytics/nn/tasks.py, import the FEM module:

from ultralytics.nn.blocks import *

In the model-parsing function parse_model, add a branch that handles FEM:

        elif m is FEM:
            c2 = args[0]
            args = [ch[f], *args]
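To make the effect of this branch concrete, here is a simplified stand-in for the bookkeeping it performs. build_fem_args is a hypothetical helper, not part of Ultralytics; it only mimics how ch (the output channels of the layers parsed so far), f (the "from" index), and args (the yaml arguments) are combined.

```python
def build_fem_args(ch, f, args):
    """Mimic the FEM branch: prepend the input width, keep the yaml output width."""
    c2 = args[0]           # output channels, taken from the yaml unchanged
    args = [ch[f], *args]  # becomes FEM(in_planes, out_planes, ...)
    return c2, args


# Hypothetical state: channel widths of backbone layers 0-5 at the 'n' scale
ch = [16, 32, 64, 64, 128, 128]
c2, args = build_fem_args(ch, f=-1, args=[512])
print(c2, args)  # 512 [128, 512]
```

As written, c2 is not passed through the width multiplier, so FEM outputs the yaml value (512 here) at every model scale, unlike the built-in modules, whose widths are scaled.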

4. Modify the yaml file

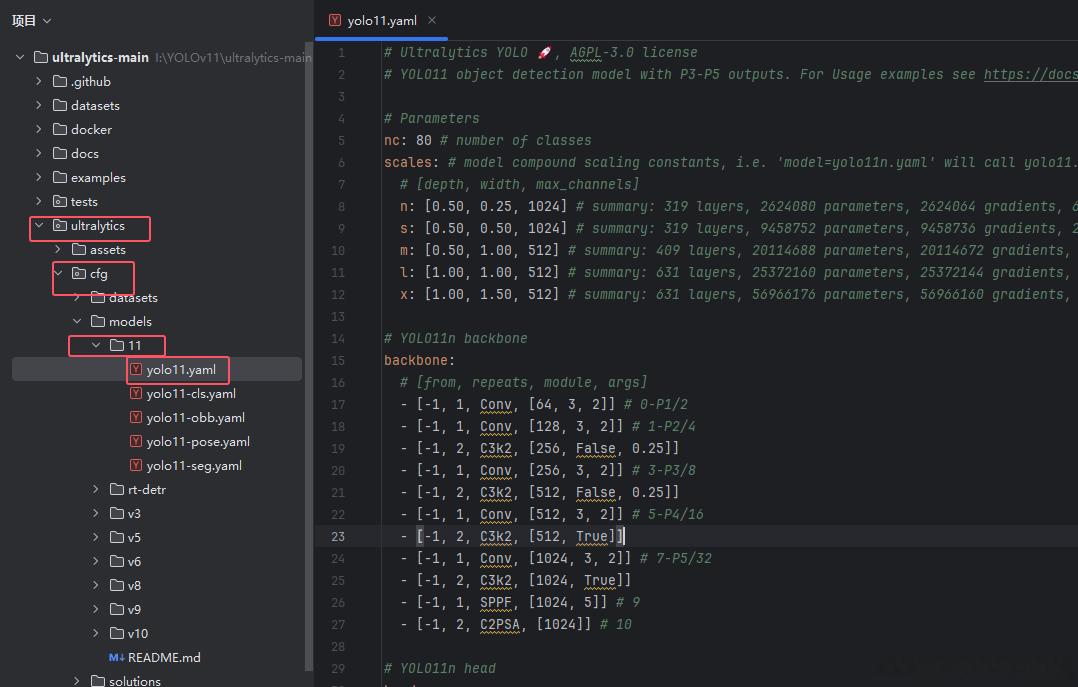

Open ultralytics/cfg/models/11/yolo11.yaml and replace the original module (here, backbone layer 6) with FEM:

# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, FEM, [512]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
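The yaml values are scaled before the layers are built. The sketch below assumes the usual Ultralytics rules (repeats become max(round(n * depth), 1); channels of built-in modules become min(c, max_channels) * width, rounded up to a multiple of 8) and shows what the FEM line turns into at each scale. The helper names are made up for this illustration, and the code approximates parse_model rather than copying it.

```python
import math

SCALES = {  # [depth, width, max_channels], copied from the yaml above
    "n": (0.50, 0.25, 1024),
    "s": (0.50, 0.50, 1024),
    "m": (0.50, 1.00, 512),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.50, 512),
}


def scaled_repeats(n, depth):
    """Depth gain applied to the 'repeats' column."""
    return max(round(n * depth), 1) if n > 1 else n


def scaled_channels(c, width, max_channels):
    """Width gain for built-in modules, rounded up to a multiple of 8."""
    return math.ceil(min(c, max_channels) * width / 8) * 8


for name, (depth, width, max_ch) in SCALES.items():
    fem_in = scaled_channels(512, width, max_ch)  # output width of layer 5 (Conv)
    repeats = scaled_repeats(2, depth)            # the FEM line uses repeats=2
    print(name, "FEM in:", fem_in, "FEM out: 512", "repeats:", repeats)
```

Under these assumptions FEM is built once for n, s, and m and twice for l and x. At the x scale the input width (768) differs from the fixed output width (512), so if parse_model stacks the repeats with identical arguments, the second FEM would expect 768 channels but receive 512. Setting repeats to 1 on the FEM line sidesteps this if it occurs.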

5. Create train.py

Create the training script:

from ultralytics.models import YOLO
import os

os.environ['KMP_DUPLICATE_LIB_OK'] = 'True'

if __name__ == '__main__':
    model = YOLO(model='ultralytics/cfg/models/11/yolo11.yaml')
    # model.load('yolov8n.pt')
    model.train(data='./data.yaml', epochs=2, batch=1, device='0', imgsz=640, workers=2, cache=False,
                amp=True, mosaic=False, project='runs/train', name='exp')

After making the changes above, you can start training the model.
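The training script reads ./data.yaml, which this article does not show. As a sketch, an Ultralytics detection dataset file lists a root path, train and val image folders, and class names; the snippet below writes a placeholder version. Every path and class name here is hypothetical and must be replaced with your own dataset, and write_data_yaml is a helper invented for this example.

```python
import tempfile
from pathlib import Path

# Placeholder dataset description; all paths and class names are hypothetical.
DATA_YAML = """\
path: ./datasets/my_dataset  # dataset root (hypothetical)
train: images/train          # training images, relative to 'path'
val: images/val              # validation images, relative to 'path'
names:
  0: class_a
  1: class_b
"""


def write_data_yaml(target="data.yaml"):
    """Write the placeholder dataset config and return its path."""
    out = Path(target)
    out.write_text(DATA_YAML, encoding="utf-8")
    return out


# Demonstration in a temporary directory, so no file is left behind
with tempfile.TemporaryDirectory() as tmp:
    written = write_data_yaml(Path(tmp) / "data.yaml")
    print(written.read_text(encoding="utf-8"))
```

Calling write_data_yaml() with no argument writes data.yaml into the current directory, which is where the training script looks for it.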

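Related improvement ideas

The FEM module can also replace the Bottleneck inside the C2f and C3 modules. The concrete code and the method for fusing it with custom modules are available in the author's group files.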
